May 14, 2008

Case study in risk management: Debian's patch to OpenSSL

Ben Laurie blogs that downstream vendors like Debian shouldn't interfere with sensitive code like OpenSSL, because they might get it wrong. In this case they did, and now all Debian and Ubuntu distros have 2-3 years of compromised keys.

Further analysis shows, however, that the failings are multiple, occur at several levels, and are shared all around. As we identified in 'silver bullets', fingerpointing is part of the problem, not the solution, so let's work the problem as professionals and avoid the blame game.

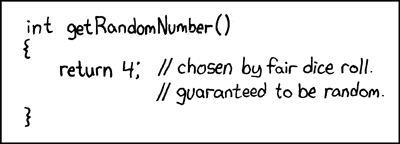

First the tech problem. OpenSSL has a trick in it that mixes uninitialised memory in with the randomness generated by the OS's formal generator. The standard idea here is that it is good practice to mix different sources of randomness into your own source. Roughly following the designs from Schneier and Ferguson (Yarrow and Fortuna), modern operating systems take several random things like disk drive activity and net activity and mix the measurements into one pool, then run it through a fast hash to filter it.

What is good practice for the OS is good practice for the application. The reason for this is that in the application, we do not know what the lower layers are doing, and especially we don't really know if they are failing or not. This is OK if it is an unimportant or self-checking thing like reading a directory entry -- it either works or it doesn't -- but it is bad for security programming. Especially, it is bad for those parts where we cannot easily test the result. And, randomness is that special sort of crypto that is very very difficult to test for, because by definition, any number can be random. Hence, in high-security programming, we don't trust the randomness of lower layers, and we mix our own [1].
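To make "mix our own" concrete, here is a minimal sketch in Python. It is not how OpenSSL or any particular OS does it; the function names `mix` and `application_seed`, the choice of SHA-256, and the particular inputs (clock, process id) are all assumptions made for illustration. The point is only that several inputs go through one hash, so the result is no weaker than the best input.

```python
import hashlib
import os
import time


def mix(chunks):
    """Hash a sequence of byte strings into one 32-byte value.

    Each chunk is length-prefixed so that [b"a", b"bc"] and [b"ab", b"c"]
    do not hash to the same value.
    """
    h = hashlib.sha256()
    for chunk in chunks:
        h.update(len(chunk).to_bytes(4, "big"))
        h.update(chunk)
    return h.digest()


def application_seed(extra=()):
    """Hypothetical application-level seed: OS randomness plus other inputs.

    If the OS generator silently fails, the clock and process id are weak,
    but they are still better than nothing; 'extra' lets the caller add more.
    """
    return mix([
        os.urandom(32),                      # the OS's formal generator
        time.time_ns().to_bytes(8, "big"),   # current time in nanoseconds
        os.getpid().to_bytes(8, "big"),      # process id
        *extra,
    ])
```

A caller would use `application_seed()` to seed its own generator, rather than trusting any single lower layer on its own.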

OpenSSL does this, which is good, but may be doing it poorly. What it (apparently) does is to mix in uninitialised buffers with the OS-supplied randoms, and little else (those who can read the code might confirm this). This is worth something, because there might be some garbage in the uninitialised buffers. The cryptoplumbing trick is to know whether it is worth the effort, and the answer is no: Often, uninitialised buffers are set to zero by lower layers (the compiler, the OS, the hardware), and often, they come from other deterministic places. So for the most part, there is no reasonable likelihood that it will be usefully random, and hence, it takes us too close to von Neumann's famous state of sin.

Secondly, to take this next-to-zero source and mix it in to the good OS source in a complex, undocumented fashion is not a good idea. Complexity is the enemy of security. It is for this reason that people study the designs like Yarrow and Fortuna and implement general purpose PRNGs very carefully, and implement them in a good solid fashion, in clear APIs and files, with "danger, danger" written all over them. We want people to be suspicious, because the very idea is suspicious.

Next. Cryptoplumbing involves by its necessity lots of errors and fixes and patches. So, bug reporting channels are very important, and apparently one was used here. The Debian team "found" the "bug" with an analysis tool called Valgrind. It was duly reported up to OpenSSL, but the handover was muffed. Let's skip the fingerpointing here; the reason it was muffed was that it wasn't obvious what was going on. And the reason it wasn't obvious looks to be that the code was too clever for its own good. It tripped up Valgrind (and Purify), it tripped up the Debian programmers, and the fix did not alert the OpenSSL programmers. Complexity is our enemy, always, in security code.

So what in summary would you do as a risk manager?

- Listen for signs of complexity and cuteness. Clobber them when you see them.

- Know which areas of crypto are very hard, and which are "simple". Basically, public keys and random generation are both devilish areas. Block ciphers and hashes are not because they can be properly tested. Tune your alarm bells for sensitivity to the hard areas.

- If your team has to do something tricky (and we know that randomness is very tricky) then encourage them to do it clearly and openly. Firewall it into its own area: create a special interface, put it in a separate file, and paint "danger, danger" all over it. KISS, which means Keep the Interface Stupidly Simple.

- If you are dealing in high-security areas, remember that only application security is good enough. Relying on other layers to secure you is only good for medium-level security, as you are susceptible to divide and conquer.

- Do not distribute your own fixes to someone else's distro. Do an application work-around, and notify upstream. (Or, consider the previous point on application security.)

- These problems will always occur. Tech breaks, get used to it. Hence, a good high-security design always considers what happens when each component fails in its promise. Defence in depth. Systems that fail catastrophically with the failure of one component aren't good systems.

- Teach your team to work on the problem, not the people. Discourage fingerpointing; shifting the blame is part of the problem, not the solution. Everyone involved is likely smart, so the issues are likely complex, not superficial (if it was that easy, we would have done it).

- Do not believe in your own superiority. Do not believe in the superiority of others. Worry about people on your team who believe in their own superiority. Get help and work it together. Take your best shot, and then ...

- If you've made a mistake, own it. That helps others to concentrate on the problem. A lot. In fact, it even helps to falsely own other people's problems, because the important thing is the result.

[1] A practical example is that which Zooko and I did in SDP1 with the initialising vector (IV). We needed different inputs (not random) so we added a counter, the time *and* some random from the OS. This is because we were thinking like developers, and we knew that it was possible for all three to fail in multiple ways. In essence we gambled that at least one of them would work, and if all three failed together then the user deserved to hang.
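A rough sketch of that idea in Python follows. This is not the SDP1 construction, which is not reproduced here; the names `make_iv` and `next_iv`, the use of SHA-256, and the 16-byte size are assumptions for illustration only. It shows a counter, the time and some OS randomness being combined so that the IV still differs between calls if any one of the three keeps working.

```python
import hashlib
import itertools
import os
import time

_counter = itertools.count()  # hypothetical per-process message counter


def make_iv(counter_value, timestamp_ns, os_random, size=16):
    """Combine three inputs into an IV of 'size' bytes.

    The goal is different IVs, not secret ones: as long as at least one
    input changes between calls, the output changes too.
    """
    h = hashlib.sha256()
    h.update(counter_value.to_bytes(8, "big"))
    h.update(timestamp_ns.to_bytes(8, "big"))
    h.update(len(os_random).to_bytes(4, "big"))
    h.update(os_random)
    return h.digest()[:size]


def next_iv():
    """Gather the three inputs; tolerate a missing OS random source."""
    try:
        rnd = os.urandom(16)
    except NotImplementedError:
        rnd = b""  # OS source unavailable: fall back on counter and time
    return make_iv(next(_counter), time.time_ns(), rnd)
```

Even with the clock stuck and the OS source returning nothing, `make_iv(0, 0, b"")` and `make_iv(1, 0, b"")` differ, because the counter alone carries the difference.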

You're missing an important part of the tech issue.

> OpenSSL does this, which is good, but may be doing it poorly. What it (apparently) does is to mix in uninitialised buffers with the OS-supplied randoms, and little else (those who can read the code might confirm this).

The Debian patch in question (http://svn.debian.org/viewsvn/pkg-openssl/openssl/trunk/rand/md_rand.c?rev=141&view=diff&r1=141&r2=140&p1=openssl/trunk/rand/md_rand.c&p2=/openssl/trunk/rand/md_rand.c) modifies two places in the code. One of them does as you suggest (mixing in uninitialized stack data); it was already annotated with #ifndef PURIFY, and is not a correctness issue. The bug report (http://bugs.debian.org/cgi-bin/bugreport.cgi?bug=363516) from rjk@ only talks about fixing that one, but kurt@ generalized to another place (line 247 in ssleay_rand_add) which is the same line of code, but in a completely different context and extremely critical -- commenting it out turned ssleay_rand_add() into a noop!
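The effect can be shown with a toy model in Python. This is not the code from md_rand.c; the class `ToyPool` and its `mix_input` flag are hypothetical, and the pool design is deliberately simplistic. It only illustrates why removing one update line from an "add" routine leaves a pool whose output no longer depends on anything the caller fed in.

```python
import hashlib


class ToyPool:
    """Toy entropy pool (illustrative only, not OpenSSL's design)."""

    def __init__(self, mix_input=True):
        self.state = bytes(32)
        # mix_input=False models a patch that comments out the update line
        self.mix_input = mix_input

    def add(self, data):
        """Fold caller-supplied data into the pool state."""
        h = hashlib.sha256(self.state)
        if self.mix_input:
            h.update(data)  # the one line that actually uses the input
        self.state = h.digest()

    def output(self):
        """Derive output bytes from the current state."""
        return hashlib.sha256(b"out" + self.state).digest()
```

With `mix_input=True`, two pools fed different data give different output. With `mix_input=False`, the state still churns on every `add()`, so nothing looks broken, yet every pool produces the same output whatever it was fed.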

As far as what needs to be done, or what lessons can be learned -- obviously this should be a wakeup call to distributors that they need to push patches upstream. Hopefully upstream authors will take this opportunity to work more closely with downstream as well. And it's important that people testing security (for example, testing RNGs as used in the field) test the software that people actually use rather than the ivory-tower upstream code.

Posted by: Andy at May 14, 2008 04:54 PM

I presume you meant to write "What is good practice for the OS is _not_ good practice for the application"?

Posted by: Frank Hecker at May 14, 2008 04:54 PM

Sorry, ignore my previous comment. I misread your meaning; you simply meant that it's good practice for applications to use the same techniques as are used in the OS.

Posted by: Frank Hecker at May 14, 2008 09:51 PM

Now that I had to change a bunch of certificates, I used openssl's feature of taking randomness from external files, which I filled with random data from different sources (OS, gpg, microphone, etc.). Each time I needed to generate a new key, I collected randomness first like this.

What is good practice for the OS is good practice for the application is good practice for the user.

Posted by: Daniel Nagy at May 15, 2008 05:30 AM

Hi Ian,

Your FC diary does not appear to be accepting comments at the moment. Other interesting aspects of this affair: You hinted, in passing, that the OpenSSL code is unreadable. Shouldn't that assertion be at the top of your list?

To "those who can read the code," one might add "those who can compile the code." The problematic patch on May 2, 2006 also added a nested comment, which wasn't fixed until September 17, 2006. In a way, this may be a good thing; nobody can have been using this revision for the duration.

Finally, in the advisory it is mentioned that all DSA keys ought to be replaced, since the DSA handshake uses "random" data generated by the offending code, and the result would be susceptible to a known plaintext attack. This is true even if the DSA key predates May 2006. But Debian's update will only replace a DSA key that itself is bad.

Posted by: Felix at May 15, 2008 07:05 PM

> OpenSSL does this, which is good, but may be doing it poorly. What it (apparently) does is to mix in *uninitialised buffers* with the OS-supplied randoms, and little else (those who can read the code might confirm this).

This is a misunderstanding. OpenSSL was simply not zeroing out buffers before using them to carry random data from the caller and stuff it into the entropy pool. Make sense? It has a temp buffer to hold data which is on its way to the entropy pool, and it doesn't bother to zero out that buffer (or the unused end of it).

A Debian developer falsely thought that the entire buffer held nothing but uninitialized RAM in all cases.

Here is a nice summary of the code failure:

http://lwn.net/SubscriberLink/282230/8c93c55edd44ccdc/

And here is a nice summary of the communication failure:

http://lwn.net/SubscriberLink/282038/ed89274bd6da5d90/

A deliberately fingerpointing article about the issue for the dull rainy hours:

http://www.gergely.risko.hu/debian-dsa1571.en.html

After looking at the actual original code in http://svn.debian.org/viewsvn/pkg-openssl/openssl/trunk/rand/md_rand.c?rev=141&view=diff&r1=141&r2=140&p1=openssl/trunk/rand/md_rand.c&p2=/openssl/trunk/rand/md_rand.c it became clear why developers can be misled. There is no comment in the code documenting the use of uninitialized memory to increase randomness! In that respect, I agree with comment 10 in http://www.links.org/?p=327 that the OpenSSL developers did not do their part to prevent this whole drama from happening.

Posted by: anon_concern at June 1, 2008 01:38 PM